By Michal Clements, W’84, WG’89

Conversational Context

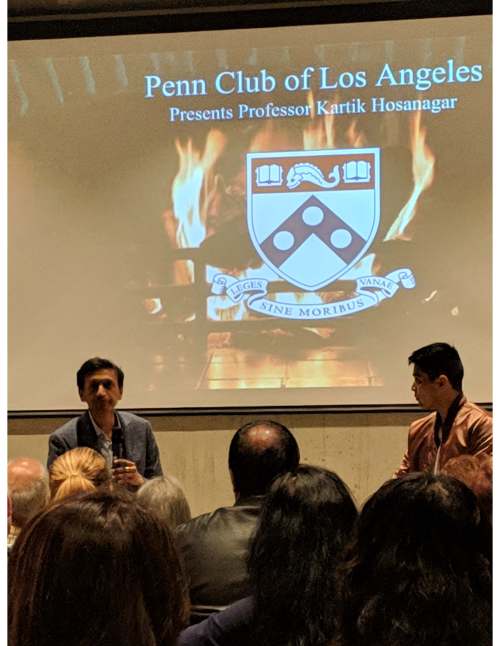

On April 4, 2019, forty Penn alumni, family, friends and work colleagues came together in the offices of Zefr, a Silicon Beach-based content-targeting firm, to gain insight into machine learning and algorithms. The fireside chat featured Wharton Professor Kartik Hosanagar, author of the just-released “A Human’s Guide to Machine Intelligence: How Algorithms Are Shaping Our Lives and How We Can Stay in Control,” and was moderated by Bing Chen, C’09.

The evening began with welcomes from Penn Club of Los Angeles President Omid Shokoufandeh, W’09, and Rebecka Zavaleta, C’13, Product Manager, Data Science at host Zefr.

Attendees included Penn alumni and local colleagues who currently work directly in machine learning and artificial intelligence, as well as alumni of all ages who were interested in learning more about these topics, including Penn graduates from the classes of 1957 and 1969.

Topics Explored

- The trend is toward reinforcement learning, where the AI creates its own data, rather than supervised learning, where a training data set is used

- We all have subconscious biases, and algorithms learn from data based on human decisions; the algorithms therefore develop and reflect those biases

- There are many examples of algorithm failures, whether the algorithms are rule-based (i.e., written by the programmer) or reinforcement-learning-based

- While diversity in the team developing algorithms is desirable, many AI teams are quite small (e.g., three or four people), and it’s not realistic to have all forms of diversity represented within them (even if the talent pool were a perfect reflection of diversity)

- Case example: Amazon discovered that its resume-screening algorithm had a gender bias that reflected the gender bias in the underlying data. While “almost no one” is testing for bias, Amazon was, and it moved to correct the issue

- Case example: a Microsoft chatbot named XiaoIce “works” with forty million followers in China (a place where there are many rules on what not to say on social media), while a similar Microsoft chatbot named Tay failed spectacularly in the US. This example is explored in the book’s opening

- Humorous case example of gaming the system: given our notorious LA traffic and the widespread use of Waze and Google Maps, one audience member regularly (and falsely) reports slowdowns in his neighborhood to keep the apps from routing traffic through the area and creating congestion

Predictions (some already in existence)

- Machines will be able to detect emotions and use that information

- Expect an exponential increase in AI capabilities in our lifetime. Examples on the current and near-term horizon include driverless cars and smart cities (at the intersection of AI and IoT)

- We already have, and will continue to have, AI that is creative (e.g., art, music)

Ideas to Address the Biases and Navigate an Algorithmic World

- In the book, Prof. Hosanagar presents “A Bill of Rights” to address some of the dangers and challenges around algorithms. Its proposed solutions include:

  - Transparency, particularly in socially critical settings

  - A human in the loop

  - Auditing the algorithm

All of us are affected daily by algorithms. I hope our Penn alumni who are lifelong learners will educate themselves on this topic and will also have the opportunity to see and hear Professor Hosanagar speak about it.